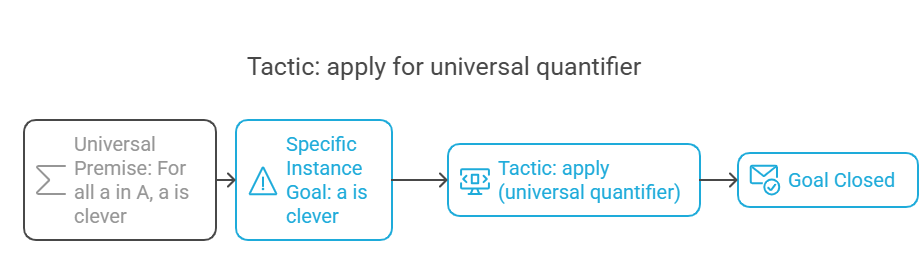

What Formal Reasoning Is For

Formal reasoning turns arguments into objects that can be checked. In COMP2067, the practical skill is reading a goal, reading the assumptions, and choosing the next proof move without Lean feedback.

The Exam Mindset

The final is handwritten. That changes the goal: you are not trying to memorize Lean output, you are training proof-state prediction. A proof state has a context above the line and a goal below it. Before each tactic step, ask what the context contains and what exact goal remains.

One Safe First Move

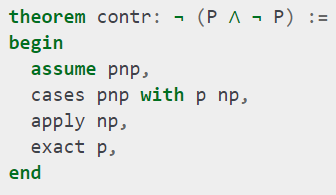

If the goal is an implication, use assume to move its left-hand side into the context. For example, if the goal is Q -> P and the context already holds p : P, then assume q adds q : Q and leaves the goal P, which p proves. Whenever the goal matches a term in the context exactly, exact closes it.

variables P Q : Prop

example (p : P) : Q -> P :=

begin

assume q,

exact p,

end

variables P Q : Prop

example : P -> Q -> P :=

begin

assume p,

assume q,

exact p,

end

Let P and Q be propositions.

We are proving: if P holds, then if Q holds, P still holds.

Start tactic mode.

Assume evidence for P; call it p.

Assume evidence for Q; call it q. It is not actually needed.

The goal is P, and p is exactly proof of P.

The proof is complete.

How to Read Any Proof State

- List the assumptions you already have. These are your tools.

- Read the goal shape. It tells you the next likely tactic.

- If the goal is an implication or universal statement, introduce it with assume.

- If the premise contains structure, unpack it before guessing a branch.

- Use apply when an implication can reduce the current goal to an earlier premise.

- After each tactic, rewrite the new context and goal on paper.

- Do not write sorry in an exam proof. It is a placeholder, not evidence.

Prop is the Lean universe of propositions. Later, Prop proofs and bool computations become related but not identical.

The goal is P -> Q. What is the safest first tactic?

Propositional Proofs Without Panic

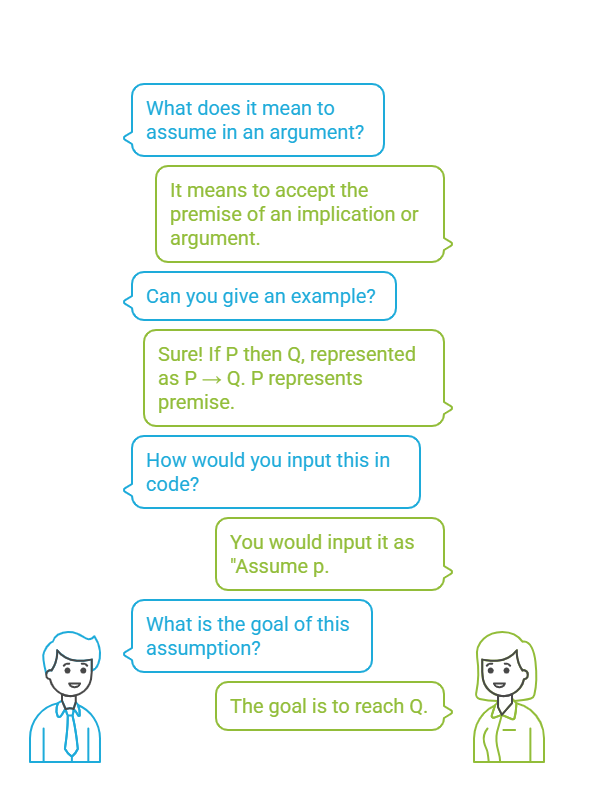

Propositional logic is the course's main tactic gym. Learn the shape: implication introduces, conjunction builds or unpacks, disjunction branches, and equivalence means two directions.

- P -> Q: Use assume to introduce P, then prove Q.

- P /\ Q goal: Use constructor, then prove both subgoals.

- P /\ Q premise: Use cases h with p q to extract both parts.

- P \/ Q goal: Use left or right only when you know which side is provable.

- P \/ Q premise: Use cases and solve both branches.

- P <-> Q: Use constructor; prove each implication.

Worked Trace: Swap a Conjunction

variables P Q R : Prop

theorem comAnd : P /\ Q -> Q /\ P :=

begin

assume pq,

cases pq with p q,

constructor,

exact q,

exact p,

end

The key move is not constructor first. The premise already contains evidence, so unpack it first. Once p : P and q : Q are visible, the goal Q /\ P is straightforward.

variables P Q R : Prop

theorem curry :

(P /\ Q -> R) <-> (P -> Q -> R) :=

begin

constructor,

assume pqr p q,

apply pqr,

constructor,

exact p,

exact q,

assume pqr pq,

cases pq with p q,

apply pqr,

exact p,

exact q,

end

We prove that taking a pair P /\ Q is equivalent to taking P and then Q separately.

constructor splits the equivalence into two directions.

First direction: assume a function from the pair to R, then assume separate evidence p and q.

apply pqr says: to prove R, it is enough to build P /\ Q.

constructor builds that pair from p and q.

Second direction: assume a function that takes P then Q, and assume a pair pq.

cases pq opens the pair into separate evidence.

Now apply the curried function to p and q.

Truth Table Discipline

- Use the required binary row order, such as 00, 01, 10, 11.

- Show intermediate columns, especially for nested implications.

- Parse implication as right-associative: P -> Q -> R means P -> (Q -> R).

- Name the result from the final column: all 1s is a tautology, all 0s is a contradiction.

The Four Propositional Moves

Implication turns a goal into a new assumption. Conjunction is either a pair to build or a pair to unpack. Disjunction is either a branch you choose with left/right or a branch split you must handle with cases. Equivalence is two implications, so it almost always starts with constructor.
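The disjunction moves can be seen together in one short proof. The sketch below is written in the same Lean 3 tactic style as the other snippets and is an illustration, not a lecture example: cases handles the disjunction in the premise, while right and left each choose a side of the goal.

```lean
variables P Q : Prop

example : P \/ Q -> Q \/ P :=
begin
  assume pq,          -- implication goal: assume the premise
  cases pq with p q,  -- disjunction premise: two branches
  -- branch 1: p : P, so prove the right side of Q \/ P
  right,
  exact p,
  -- branch 2: q : Q, so prove the left side of Q \/ P
  left,
  exact q,
end
```

Each branch picks its side only after the evidence for that branch is visible in the context.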

For (P -> Q) \/ (P -> R) -> P -> Q \/ R, after assuming the premises, what should you split?

When Constructive Evidence Runs Out

Classical reasoning is not a random extra trick. It is used when the proof needs a case split that the current evidence does not constructively provide.

Key Syntax

variables P : Prop

open classical

example : P \/ not P :=

begin

cases em P with p np,

left,

exact p,

right,

exact np,

end

This creates two branches: one where p : P, and one where np : not P.

The Evidence Problem

From not (P /\ Q), you know the pair cannot exist. You do not yet know whether P failed, whether Q failed, or both. That is why the direction not (P /\ Q) -> not P \/ not Q needs classical help in the course treatment.

Classical De Morgan Pattern

variables P Q : Prop

open classical

theorem dm2_em :

not (P /\ Q) -> not P \/ not Q :=

begin

assume npq,

cases em P with p np,

right,

assume q,

apply npq,

constructor,

exact p,

exact q,

left,

exact np,

end

Assume it is impossible for both P and Q to hold.

Classically split on whether P holds.

If P holds, we cannot prove not P, so choose the right side: not Q.

To prove not Q, assume q : Q and derive a contradiction.

The contradiction is that P /\ Q can now be built from p and q, violating npq.

If P does not hold, choose the left side and give np directly.

- EM: P \/ not P. Use when a proof needs a case split.

- RAA: to prove P, show that not P leads to impossibility.

- Double negation: not not P -> P is a classical principle.

What to Know, Not Overdo

The exam-relevant point is not philosophy for its own sake. You need to know why constructive evidence is stronger, why intuitionistic logic avoids using EM automatically, why EM gives a missing case split, and how RAA changes a goal into a double-negation goal. Do not treat classical tactics as magic; write the branch reason beside the proof.
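To see why EM supplies the missing case split, here is a minimal Lean 3 sketch that derives the double-negation principle from em. It assumes exfalso is acceptable in your proofs; the course may phrase the same step differently.

```lean
variables P : Prop

open classical

example : not (not P) -> P :=
begin
  assume nnp,
  cases em P with p np,
  -- branch 1: P holds, so the goal is immediate
  exact p,
  -- branch 2: np : not P contradicts nnp : not (not P)
  exfalso,
  apply nnp,
  exact np,
end
```

The branch reason is written beside each case, which is the habit the paragraph above recommends.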

Why does not (P /\ Q) -> not P \/ not Q need classical reasoning in this course?

Predicates, Quantifiers, and Equality

Predicate logic adds objects. That means the proof is no longer only about whether P or Q holds; it is about which object the evidence is about.

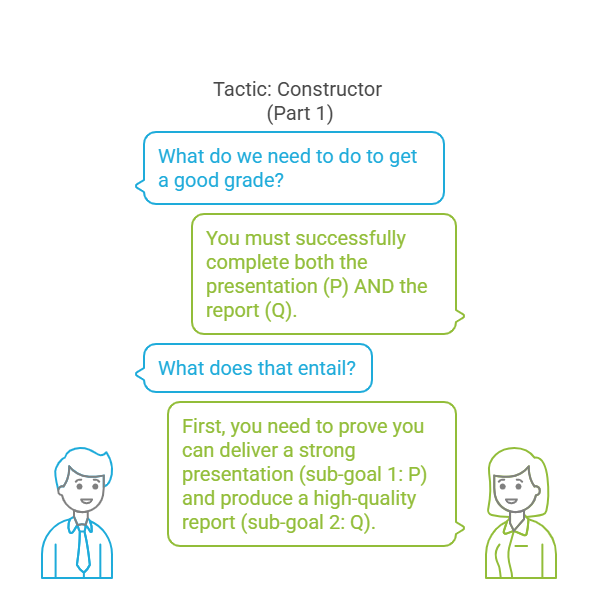

Universal Chaining

variables A : Type

variables PP QQ : A -> Prop

example : (forall x : A, PP x) ->

(forall y : A, PP y -> QQ y) ->

forall z : A, QQ z :=

begin

assume p pq a,

apply pq,

apply p,

end

Existential Chaining

variables A : Type

variables PP QQ : A -> Prop

example : (exists x : A, PP x) ->

(forall y : A, PP y -> QQ y) ->

exists z : A, QQ z :=

begin

assume p pq,

cases p with a pa,

existsi a,

apply pq,

exact pa,

end

If some object has property PP, and every PP object has property QQ, then some object has property QQ.

Assume the existential evidence and the universal rule.

Open the existential evidence: it gives a real witness a and proof pa : PP a.

Use the same witness a for the new existential goal.

Apply the universal rule pq; now it is enough to prove PP a.

pa is exactly that proof.

- forall goal: Assume a generic object.

- forall premise: Apply it to the object in front of you.

- exists goal: Use existsi with a witness that makes the goal true.

- exists premise: Use cases h with a ha to reveal the witness and proof.

- Equality: rewrite h, rewrite <- h, or rewrite h at hx.

- Classical split: cases em (PP a) with h hnot.

Witness Rule

Do not guess existential witnesses just because existsi is available. If an existential premise exists, unpack it first. The witness that came with evidence is usually the one the proof needs.

A predicate is a property of one object, such as PP x. A relation is a predicate with multiple inputs, such as RR x y. Quantifiers decide how objects enter the proof: forall gives a reusable rule, while exists gives one witness and its evidence.

For De Morgan with predicates, keep the two patterns separate: not (exists x, PP x) <-> forall x, not (PP x) is the constructive equivalence emphasized in the slides; not (forall x, PP x) <-> exists x, not (PP x) is the classical pattern.
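One direction of the constructive pattern can be sketched as follows. This is an illustrative Lean 3 proof, not taken from the slides: no classical axiom is opened, because the object a that the goal hands over is itself the witness.

```lean
variables A : Type
variables PP : A -> Prop

example : not (exists x : A, PP x) -> forall x : A, not (PP x) :=
begin
  assume h a pa,  -- h : no witness exists, a : A, pa : PP a
  apply h,        -- goal becomes: exists x : A, PP x
  existsi a,      -- reuse the object already in the context
  exact pa,
end
```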

variables A : Type

variables PP : A -> Prop

example : forall x y : A,

x = y -> PP x -> PP y :=

begin

assume x y eq hx,

rewrite eq at hx,

exact hx,

end

For any two objects x and y, if they are equal and PP x holds, then PP y holds.

Introduce the objects, equality evidence, and predicate evidence.

Rewrite inside the hypothesis hx, changing evidence about x into evidence about y.

Now hx has the exact target shape.

Equality as Transport

Equality is itself a proposition. When you use rewrite, you transport evidence across that equality. Congruence is the related idea that equal inputs stay equal after applying the same function, which is why examples such as congr_arg nat.succ matter in natural-number proofs.
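A minimal Lean 3 sketch of the congruence idea mentioned above, using congr_arg with nat.succ. The statement is chosen here for illustration and may differ from the lecture's example.

```lean
example : forall m n : ℕ, m = n -> nat.succ m = nat.succ n :=
begin
  assume m n h,
  -- equal inputs stay equal after applying the same function
  exact congr_arg nat.succ h,
end
```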

What does cases h with a ha do when h : exists x : A, PP x?

Booleans and Naturals as Machines

Booleans and natural numbers are not vague built-ins in the lecture story. They are inductive datatypes, and their functions reduce by following constructor patterns.

Constructor View

inductive lecture_bool : Type

| tt : lecture_bool

| ff : lecture_bool

inductive lecture_nat : Type

| zero : lecture_nat

| succ : lecture_nat -> lecture_nat

A constructor is a named way to build a value of an inductive type. The snippets use lecture-prefixed names so the example is copyable without colliding with Lean's built-in bool and nat. In the course material, the real constructors are tt, ff, 0, and nat.succ.

Boolean Definition From Rows

Fix the first Boolean input. If the row pattern says "return the second input", write | tt b := b. If it says "always true", write | ff b := tt.

def bimp : bool -> bool -> bool

| tt b := b

| ff b := tt

Natural Reduction Trace

For course-style addition, recursion follows the second argument. Reduction means unfolding the matching equation until constructors remain:

def add : ℕ -> ℕ -> ℕ

| m 0 := m

| m (nat.succ n) := nat.succ (add m n)

So reduce by stripping successors from the second input until the base case appears.

def bimp : bool -> bool -> bool

| tt b := b

| ff b := tt

#reduce tt && (tt || ff)

#reduce ff && (tt || ff)

Boolean implication is a function taking two Boolean inputs.

If the first input is true, implication returns the second input.

If the first input is false, implication is true regardless of the second input.

Reduction questions ask you to unfold definitions until only constructors remain.

The first reduction is true; the second is false because false-and-anything is false.
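Because a Boolean has only two constructors, an equation about a Boolean function can often be checked by reducing both cases. The Lean 3 sketch below illustrates this for bimp; the namespace sketch is a hypothetical wrapper added here only to avoid name clashes when copying the snippet.

```lean
namespace sketch

def bimp : bool -> bool -> bool
| tt b := b
| ff b := tt

example : forall b : bool, bimp b tt = tt :=
begin
  assume b,
  cases b,  -- one goal for ff, one for tt
  refl,     -- bimp ff tt reduces to tt
  refl,     -- bimp tt tt reduces to tt
end

end sketch
```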

- cases x: Often solves Boolean equalities by checking tt and ff.

- is_tt: Bridge from a Boolean value to a proposition.

- dsimp: Unfold a definition when Lean needs help exposing the computation during a reduction trace.

- succ: Constructor that builds the next natural number.

- zero is not equal to succ n.

- double, half, add, mul, ble; induction proofs are background unless the question asks for them.

Boolean Definitions Worth Knowing

def bxor : bool -> bool -> bool

| tt b := bnot b

| ff b := b

def biff : bool -> bool -> bool

| tt b := b

| ff b := bnot b

bxor flips the second input when the first input is true. biff keeps the second input when the first input is true and flips it when the first input is false.

Boolean-to-Proposition Bridge

&&, ||, and bnot compute on Boolean data. /\, \/, and not combine propositions. The lecture bridge is is_tt b, meaning the Boolean value b is true as a proposition.

def double : ℕ -> ℕ

| 0 := 0

| (nat.succ n) := nat.succ (nat.succ (double n))

#reduce double 2 -- 4

The base case says double of zero is zero.

The successor case says: remove one successor, recursively double the smaller number, then add two successors back.

For two, strip one successor and add two around the recursive call.

Strip the second successor and add two more.

Hit zero, then rebuild outward. The result is four.

Revision Functions: Unknown and UnknownII

The revision deck uses small recursive functions to test whether you can read constructor equations. unknown behaves like maximum: unknown 0 2 reduces to 2, and unknown 5 3 reduces to 5. unknownII adds two successors in the recursive case, so the checked examples unknownII 5 3 and unknownII 3 5 both reduce to 8.

def unknown : ℕ -> ℕ -> ℕ

| a 0 := a

| 0 b := b

| (nat.succ a) (nat.succ b) := nat.succ (unknown a b)

def unknownII : ℕ -> ℕ -> ℕ

| a 0 := a

| 0 b := b

| (nat.succ a) (nat.succ b) := nat.succ (nat.succ (unknownII a b))

What is the best description of && versus /\?

Lists, Trees, and Exam Practice

Lists and trees complete the datatype arc. The exam-facing skill is not proving an industrial sorting algorithm; it is understanding constructors, no-confusion, injectivity, insertion, and traversal.

List Shape

#check (@list.nil)

#check (@list.cons)

[1,2,3] is shorthand for 1 :: 2 :: 3 :: []. If a :: l = b :: m, then injection gives equality of heads and equality of tails. No-confusion says nil cannot equal a cons list.

Tree Shape

inductive Tree : Type

| leaf : Tree

| node : Tree -> ℕ -> Tree -> Tree

Tree sort builds a binary-search tree, then reads it with in-order traversal: left subtree, root, right subtree.

example : [1, 2, 3] = 1 :: 2 :: 3 :: [] :=

begin

refl,

end

Lean infers this as a list of natural numbers.

[] is notation for the empty list, list.nil.

:: is notation for list.cons: it puts one element at the front of an existing list.

Bracket notation is convenience syntax for repeated cons.

refl works because both sides reduce to the same constructor structure.

Tree Sort Trace

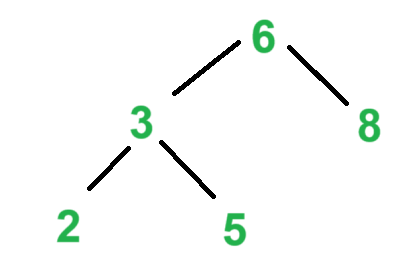

Insert the elements of [6, 3, 8, 2, 5] one at a time. In-order traversal of the resulting tree gives 2, 3, 5, 6, 8, matching the retained lecture example.

Insertion Example

The revision notes keep the list-insertion example ins 5 [6, 4, 2]. With the retained comparator, it reduces to [6, 5, 4, 2]. The exam skill is to trace one comparison at a time, not to recite the whole sorting algorithm.

What Can Be Tested

No-confusion and injectivity matter. [] cannot equal a :: l, and a :: l = b :: m gives both a = b and l = m. The notes say the injection tactic itself is not the exam target, but the injective property is still fair game.

insert [6, 3, 8, 2, 5]

6 becomes the root

3 goes left of 6

8 goes right of 6

2 goes left of 3

5 goes right of 3

in-order: 2, 3, 5, 6, 8

Tree sort has two phases: build the tree with repeated insertion, then traverse it.

The first item anchors the structure.

Smaller values move left; larger or equal values move right.

The tree stores comparison decisions, not the original order.

Reading left-root-right recovers sorted order.

Closed-Book Mini Paper

- Truth table: parse P -> Q -> P, write rows in the required binary order.

- Prove P /\ Q -> Q /\ P in Lean tactic style.

- Use EM to explain the hard De Morgan direction.

- Translate a universal English statement into Lean predicates.

- Define Boolean implication from a truth table.

- Trace insertion of one element into a list, then draw one tree-sort example.

Which traversal gives sorted output from the binary-search tree in the course examples?

Foundations: Alphabets, Words, and Languages

A formal language begins with a finite alphabet, builds finite words, and then studies sets of those words with operations such as concatenation and Kleene star.

Core Notation

Sigma = {0,1}

epsilon in Sigma*

001 in { w in Sigma* | w ends in 1 }

Learning Targets

- Distinguish symbols, words, alphabets, and languages.

- Use epsilon, Sigma*, membership, and length notation accurately.

- Compute language operations: concatenation, union, and Kleene star.

Concept Explanation

An alphabet, usually denoted by Σ, is a finite set of symbols. A word is a finite sequence of symbols from that alphabet. The empty word epsilon has length 0 and is still a word. Sigma* is the set of all finite words over Sigma, including epsilon.

A language is any subset of Sigma*. For Sigma = {0,1}, the set of words with an even number of 1s is a language, and the question 0101 in L? is a membership question.

Worked Trace: Concatenation and Star

- Let A = {0, 11} and B = {epsilon, 1}.

- AB = {0epsilon, 01, 11epsilon, 111}.

- Simplify with xepsilon = x: AB = {0, 01, 11, 111}.

- A* contains any finite concatenation of words from A: epsilon, 0, 11, 00, 011, 110, and so on.
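The trace above can be reproduced with a few lines of Python. This is a minimal sketch: the helper names concat and star_up_to are illustrative, the empty string "" stands for epsilon, and because A* is infinite the star is cut off after a chosen number of factors.

```python
from itertools import product


def concat(A, B):
    """Language concatenation: every word of A followed by every word of B."""
    return {x + y for x, y in product(A, B)}


def star_up_to(A, k):
    """Finite slice of A*: words built from at most k factors taken from A."""
    result = {""}  # zero factors gives the empty word
    layer = {""}
    for _ in range(k):
        layer = concat(layer, A)
        result |= layer
    return result


A = {"0", "11"}
B = {"", "1"}  # "" plays the role of epsilon

print(sorted(concat(A, B)))      # the four words of AB
print(sorted(star_up_to(A, 2)))  # epsilon plus words with one or two factors
```

Since x + "" == x in Python, the simplification step xepsilon = x happens automatically.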

Sigma = {a,b}

Sigma* = {epsilon, a, b, aa, ab, ba, bb, ...}

L = { w in Sigma* | w starts with a }

ab in L

epsilon notin L

The usable symbols are a and b.

All finite strings over those symbols form Sigma*.

The language L keeps exactly the words whose first symbol is a.

ab is accepted by that description.

epsilon has no first symbol, so it is not in L.
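The same example can be checked mechanically. In this small sketch, words_up_to and in_L are illustrative names; Sigma* is infinite, so only words up to a chosen length are listed.

```python
from itertools import product


def words_up_to(sigma, max_len):
    """Yield the words of Sigma* in order of length, up to max_len."""
    for n in range(max_len + 1):
        for letters in product(sigma, repeat=n):
            yield "".join(letters)


def in_L(w):
    """Membership test for L = { w | w starts with a }."""
    return w.startswith("a")


words = list(words_up_to("ab", 2))
print(words)
print([w for w in words if in_L(w)])
```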

Misconception Check

epsilon is not the empty set. It is one word of length 0. A language can contain epsilon, contain no words at all, or contain infinitely many words. Keep the word epsilon separate from the language {epsilon} and the empty language {}.

Finite Automata: DFA, NFA, and Lexers

Finite automata recognize regular languages by moving between finitely many states. DFAs make one forced move; NFAs can branch, and subset construction turns those branches into DFA states.

Tuple View

DFA = (Q, Sigma, delta, q0, F)

NFA = (Q, Sigma, delta, S, F)

In the COMP2040 notation, an NFA has a set of start states S subset Q, and delta returns a set of possible next states.

Learning Targets

- Read DFA and NFA transition diagrams as formal transition functions.

- Trace input using extended transitions and NFA marker sets.

- Explain why subset construction proves DFA/NFA equivalence for regular languages.

Concept Explanation

A DFA has exactly one current state. After reading the whole word, it accepts if that state is in F. The extended transition delta-hat(q,w) means "start at q and consume the whole word w".

An NFA may have many possible current states, beginning from S subset Q. It accepts if at least one possible path consumes the whole word and ends in a final state. Subset construction makes a DFA whose states are sets of NFA states.
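The extended transition for a DFA is just a loop over the input. The sketch below uses a hypothetical two-state DFA for binary words ending in 1; the state names and the dictionary encoding of delta are assumptions made for illustration.

```python
def delta_hat(delta, q, w):
    """Extended transition: start at q and consume the whole word w."""
    for symbol in w:
        q = delta[(q, symbol)]
    return q


def accepts(delta, q0, F, w):
    """A DFA accepts w when delta_hat(q0, w) lands in F."""
    return delta_hat(delta, q0, w) in F


# Hypothetical DFA: q1 means "the last symbol read was 1".
DELTA = {
    ("q0", "0"): "q0", ("q0", "1"): "q1",
    ("q1", "0"): "q0", ("q1", "1"): "q1",
}

print(accepts(DELTA, "q0", {"q1"}, "001"))
print(accepts(DELTA, "q0", {"q1"}, "10"))
```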

Worked Trace: NFA Markers

- Start marker set: {q0}.

- Read a: move all markers along a-arrows, giving {q0,q1}.

- Read b: move from every marked state on b, giving {q2}.

- If q2 in F, the word ab is accepted.

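- Lexers: keywords and operators such as while or >= are token patterns a finite automaton can recognize.

- NFA start: a set S subset Q, not just one q0.

NFA state set after w: S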

on symbol a:

S' = union { delta(q,a) | q in S }

DFA transition:

Delta(S,a) = S'

A DFA state in the constructed machine is a set of NFA states.

To read one symbol, look at every current NFA marker.

Collect every destination reachable on that symbol.

That collected set is the next DFA state.

A constructed DFA state is accepting when it contains at least one accepting NFA state.
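The construction described above can be sketched in Python. The NFA used here is a hypothetical one consistent with the marker trace ({q0} on a gives {q0,q1}, then b gives {q2}); the function names and the dictionary encoding are assumptions. Only reachable subsets are built.

```python
def step(delta, states, symbol):
    """Union of delta(q, symbol) over every current NFA marker q."""
    nxt = set()
    for q in states:
        nxt |= delta.get((q, symbol), set())
    return frozenset(nxt)


def subset_construction(delta, start, finals, alphabet):
    """Build the reachable part of the subset-construction DFA."""
    start = frozenset(start)
    dfa_delta = {}
    seen = {start}
    todo = [start]
    while todo:
        S = todo.pop()
        for a in alphabet:
            T = step(delta, S, a)
            dfa_delta[(S, a)] = T
            if T not in seen:
                seen.add(T)
                todo.append(T)
    # A DFA state accepts when it contains at least one accepting NFA state.
    dfa_finals = {S for S in seen if S & set(finals)}
    return seen, dfa_delta, start, dfa_finals


NFA_DELTA = {("q0", "a"): {"q0", "q1"}, ("q1", "b"): {"q2"}}
states, dfa_delta, dfa_start, dfa_finals = subset_construction(
    NFA_DELTA, {"q0"}, {"q2"}, "ab"
)
print(len(states), "reachable DFA states")
```

The empty set appears as a DFA state too: it is the dead state reached when no NFA marker can move.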

Misconception Check

Nondeterminism is not guessing one path and hoping. The formal trace keeps all possible current states at once. The NFA accepts only when at least one complete path reaches a final state after the whole input has been consumed.

In subset construction, what does one constructed DFA state represent?

Regular Languages: Regex, Minimisation, and Pumping

Regular expressions describe exactly the regular languages. Automata constructions show how to recognize them, while minimisation and the pumping lemma reveal structure and limits.

Precedence

* binds tightest.

Concatenation binds next.

+ or union binds loosest.

Learning Targets

- Parse regular expressions using precedence and parentheses.

- Explain the NFA constructions for union, concatenation, and star.

- Use table filling for DFA minimisation and pumping lemma reasoning for non-regularity.

Concept Explanation

Regular expressions are algebraic descriptions of languages. a denotes the singleton language {a}, r+s denotes union, rs denotes concatenation, and r* denotes zero or more repetitions.

Every regular expression can be converted to an NFA, and every DFA/NFA language can be described by a regular expression. Minimisation removes redundant DFA states; the pumping lemma helps prove that some languages cannot be regular.

Worked Trace: Pumping 0ⁿ1ⁿ

- Assume for contradiction that L = {0ⁿ1ⁿ | n ≥ 0} is regular.

- Let p be the pumping length and choose w = 0ᵖ1ᵖ.

- For any split w = xyz with |xy| <= p and |y| > 0, the substring y contains only 0s.

- Pump down to xz. It has fewer 0s than 1s, so xz notin L.

- This contradicts the pumping lemma, so L is not regular.
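The case analysis above can be checked by brute force for one concrete pumping length. This is only an illustration with hypothetical helper names: running it for p = 5 is not a proof, because the real argument must hold for every p.

```python
def in_L(w):
    """Membership test for L = { 0^n 1^n | n >= 0 }."""
    n = len(w) // 2
    return w == "0" * n + "1" * n


def pumped_down_words(p):
    """Yield xz for every split w = xyz of 0^p 1^p with |xy| <= p, |y| > 0."""
    w = "0" * p + "1" * p
    for i in range(p + 1):             # i = |x|
        for j in range(i + 1, p + 1):  # j = |xy|, so |y| = j - i > 0
            yield w[:i] + w[j:]


print(all(not in_L(v) for v in pumped_down_words(5)))
```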

(0+1)*11

= all binary words ending in 11

0(0+1)* + epsilon

= epsilon or words beginning with 0

(0+1)* means any finite binary prefix.

Appending 11 forces the final two symbols to be 11.

0(0+1)* means a first symbol 0, followed by any binary suffix.

Union with epsilon also accepts the empty word.
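Both expressions can be tested with Python's re module. Note the notation change: the theory's + (union) is written | in Python, and fullmatch is used so that the whole word must match. The constant names are illustrative.

```python
import re

# (0+1)*11 in course notation
ENDS_IN_11 = re.compile(r"(0|1)*11")

# 0(0+1)* + epsilon in course notation
EMPTY_OR_STARTS_WITH_0 = re.compile(r"(0(0|1)*)?")

for word in ["0011", "110", "", "01"]:
    print(
        repr(word),
        bool(ENDS_IN_11.fullmatch(word)),
        bool(EMPTY_OR_STARTS_WITH_0.fullmatch(word)),
    )
```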

Misconception Check

The pumping lemma is a one-way necessary condition for regular languages. Passing a pumping-style test does not prove a language regular. To prove regularity, give a regex, DFA, NFA, or closure argument.

With standard precedence, what does ab* mean?

Pushdown Automata: Stack Traces and Acceptance

A PDA extends finite automata with a stack. That extra memory recognizes context-free patterns such as balanced structure, palindromes, and 0ⁿ1ⁿ.

Transition Shape

(q, a, X) -> (p, gamma)

In state q, read input a or epsilon, pop X, push gamma, and move to p.

Gamma is the stack alphabet; lowercase gamma is the string of stack symbols pushed by a transition.

Learning Targets

- Read PDA transition notation and update stack contents correctly.

- Trace instantaneous descriptions using input remainder and stack contents.

- Compare final-state acceptance with empty-stack acceptance.

Concept Explanation

An instantaneous description records the machine state, unread input, and current stack. A PDA can use epsilon moves and nondeterministic choices, so a trace may branch. The stack is last-in-first-out memory, which is why it suits nested or mirrored structure.

Final-state acceptance means the input is consumed and the current state is final. Empty-stack acceptance means the input is consumed and the stack has been emptied. Standard constructions can convert between these acceptance styles, but exam traces must follow the requested mode.

Worked Trace: 0ⁿ1ⁿ

- Start with input 0011 and bottom stack marker Z.

- Read first 0, push X: stack XZ.

- Read second 0, push X: stack XXZ.

- Switch to the pop phase and read first 1, pop one X: stack XZ.

- Read second 1, pop one X: stack Z.

- Input is consumed and the count matched, so the accepting move is available.

for each 0 before the midpoint:

push X

for each 1 after the midpoint:

pop X

accept if input is done and markers match

The stack remembers how many 0s were seen.

The state records whether the machine is still pushing or has started popping.

Each 1 must remove exactly one marker.

Extra 0s, extra 1s, or a wrong order make the trace fail.
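The push and pop phases can be simulated directly. In this sketch the state names "push" and "pop" are assumptions, the stack is printed with its top on the left (as in XXZ), and acceptance is taken to mean that the input is consumed with only Z left.

```python
def trace_0n1n(word):
    """Return (list of instantaneous descriptions, accepted?) for 0^n 1^n."""
    state, stack = "push", ["Z"]  # top of the stack is the end of the list
    ids = [(state, word, "".join(reversed(stack)))]
    for i, symbol in enumerate(word):
        if symbol == "0" and state == "push":
            stack.append("X")         # remember one 0
        elif symbol == "1" and stack[-1] == "X":
            state = "pop"             # once popping starts, no more pushes
            stack.pop()               # each 1 removes exactly one marker
        else:
            return ids, False         # wrong order or too many 1s
        ids.append((state, word[i + 1:], "".join(reversed(stack))))
    return ids, stack == ["Z"]        # leftover X means too many 0s


ids, accepted = trace_0n1n("0011")
for description in ids:
    print(description)
print("accepted:", accepted)
```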

(q, 011, XZ) means state q, unread input 011, and stack XZ.

Misconception Check

The stack is not random access memory. A PDA only sees the top stack symbol. This is enough for nested and mirrored patterns, but not for arbitrary comparison unless the language has a context-free structure the stack can exploit.

What does (q, a, X) -> (p, gamma) say?

CFGs and Empty-Stack PDAs

Context-free grammars generate languages by rewriting variables. Pushdown automata recognize the same class by using a stack to remember unfinished structure.

Concept Explanation

A CFG is a tuple G = (V, Sigma, R, S). V is the finite set of nonterminals, Sigma is the alphabet of terminals, R is the finite set of productions, and S is the start symbol. A production such as A -> alpha replaces one nonterminal with a string of terminals and nonterminals.

A sentential form is any string reachable from S during derivation. The final word is reached when no nonterminals remain. A leftmost derivation always rewrites the leftmost remaining nonterminal; a rightmost derivation always rewrites the rightmost one.

Worked Derivation

G:

S -> a S b | epsilon

Leftmost derivation of aabb:

S

=> a S b

=> a a S b b

=> a a epsilon b b

= aabb

The intermediate strings S, a S b, and a a S b b are sentential forms. The completed terminal string aabb belongs to the generated language.

start with S on the stack

if top is A, choose A -> alpha

replace A by alpha on the stack

if top is terminal a and input has a, pop and read a

accept when input is consumed and stack is empty

The PDA simulates a derivation with its stack.

A nonterminal on the stack is a promise to generate part of the input.

A production expands that promise.

A terminal on the stack must match the next input symbol.

Empty stack means every promised symbol has been matched.
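The simulation described above can be sketched as a search over PDA configurations. Everything named here is hypothetical: productions map a one-character nonterminal to its right-hand sides, and the pruning rule is an assumption that keeps the search finite for S -> a S b | epsilon. It is not a general parser.

```python
def accepts_by_empty_stack(productions, start, word):
    """Search configurations (input position, stack) of the CFG-derived PDA.

    The stack is a tuple with its top at index 0.
    """
    todo = [(0, (start,))]
    seen = set()
    while todo:
        config = todo.pop()
        if config in seen:
            continue
        seen.add(config)
        pos, stack = config
        if not stack:
            if pos == len(word):
                return True  # input consumed and stack empty
            continue
        top, rest = stack[0], stack[1:]
        if top in productions:
            # Epsilon move: replace nonterminal A by alpha for each A -> alpha.
            for alpha in productions[top]:
                new_stack = tuple(alpha) + rest
                terminals = sum(1 for s in new_stack if s not in productions)
                # Pruning (assumption of this sketch): drop stacks that
                # promise more terminals than there is input left.
                if terminals <= len(word) - pos:
                    todo.append((pos, new_stack))
        elif pos < len(word) and word[pos] == top:
            # Input move: the terminal on top matches the next input symbol.
            todo.append((pos + 1, rest))
    return False


GRAMMAR = {"S": ["aSb", ""]}  # S -> a S b | epsilon

print(accepts_by_empty_stack(GRAMMAR, "S", "aabb"))
print(accepts_by_empty_stack(GRAMMAR, "S", "abab"))
```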

Trace Pattern

- Push the start symbol S.

- Use epsilon moves to expand nonterminals by grammar productions.

- Use input-consuming moves only when the top stack symbol is the same terminal.

- Keep the stack order consistent: the next symbol to be matched must be on top.

- Accept by empty stack after all input has been read.

In S => a S b => a a S b b, what is a a S b b?

Determinism and Ambiguity

A DPDA has no real choice at any instant. Ambiguity is different: it is a grammar property where the same string has more than one parse tree or leftmost derivation.

Concept Explanation

A deterministic PDA has at most one valid move for a given state, input situation, and stack top. The important exam trap is epsilon/input competition: if an epsilon transition is available for a state and stack top, an input-consuming transition for the same state and stack top cannot also be available.

The delimiter language { w $ wᴿ | w in {0,1}* } is DPDA-friendly because $ tells the machine exactly when to stop pushing and start popping. Without a delimiter, the midpoint must be guessed, which needs nondeterminism.

Worked DPDA Trace

Input: 01$10

Before $: push symbols

read 0, stack: 0 Z

read 1, stack: 1 0 Z

read $, switch to matching

read 1, pop 1

read 0, pop 0

accept when input is done and stack returns to Z

The delimiter removes the ambiguous control decision. The stack stores w; the second half must match it in reverse order.
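Because every step is forced, the trace translates into a loop with no branching. The function name and the use of a Boolean for the two phases are assumptions of this sketch.

```python
def accepts_marked_palindrome(word):
    """Deterministic check for { w $ reverse(w) | w in {0,1}* }."""
    stack = ["Z"]   # bottom marker; top of the stack is the end of the list
    pushing = True  # phase before the delimiter
    for symbol in word:
        if pushing:
            if symbol == "$":
                pushing = False       # the delimiter fixes the midpoint
            elif symbol in ("0", "1"):
                stack.append(symbol)
            else:
                return False
        elif symbol in ("0", "1") and stack[-1] == symbol:
            stack.pop()               # match the stored symbol in reverse
        else:
            return False
    return not pushing and stack == ["Z"]


print(accepts_marked_palindrome("01$10"))
print(accepts_marked_palindrome("01$01"))
```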

E -> E + E

E -> E * E

E -> id

string: id + id * id

parse 1: (id + id) * id

parse 2: id + (id * id)

The same terminal string can be generated with different tree shapes.

One tree makes plus happen before multiplication.

The other tree makes multiplication happen before plus.

That is ambiguity, even if one parse is the intended programming-language meaning.

A grammar can be rewritten to encode precedence and associativity.
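For this particular grammar, the number of parse trees of a token list can be counted by trying every operator as the root. The function below is a sketch specific to E -> E + E | E * E | id, with a hypothetical name; a count above 1 for some string shows the grammar is ambiguous.

```python
from functools import lru_cache


def count_parse_trees(tokens):
    """Count parse trees for E -> E + E | E * E | id over a token list."""
    tokens = tuple(tokens)

    @lru_cache(maxsize=None)
    def count(i, j):
        """Number of trees deriving tokens[i:j] from E."""
        if j - i == 1:
            return 1 if tokens[i] == "id" else 0
        total = 0
        for k in range(i + 1, j - 1):  # candidate root operator position
            if tokens[k] in ("+", "*"):
                total += count(i, k) * count(k + 1, j)
        return total

    return count(0, len(tokens))


print(count_parse_trees(["id", "+", "id", "*", "id"]))
```

The two trees counted for id + id * id are the ones with root + and root *.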

Derivation-Tree Test

- Pick one target string, such as id + id * id.

- Build one derivation tree where the root operator is *.

- Build another derivation tree where the root operator is +.

- If both trees yield the same leaf sequence, the grammar is ambiguous.

- Do not confuse grammar ambiguity with machine nondeterminism; they are related topics, not the same property.

The $ symbol is not decoration; it carries the information that makes the midpoint deterministic.

Which situation violates DPDA determinism?

Turing Machines and the Hierarchy

Turing machines extend finite control with an unbounded tape. The Chomsky hierarchy organizes grammar restrictions, automata models, and the language classes they define.

Concept Explanation

The hierarchy moves from regular languages to context-free languages, context-sensitive languages, recursive languages, and recursively enumerable languages. A context-sensitive grammar uses non-contracting productions: the right side is at least as long as the left side, except for the usual special start-symbol S -> epsilon caveat.

Unrestricted grammars remove that length restriction and match the power of Turing-machine recognizable languages. A Turing machine is commonly given as (Q, Sigma, Gamma, delta, q0, B, F), with input alphabet Sigma, tape alphabet Gamma, blank B, transition function delta, start state q0, and accepting states F.

Worked TM Trace

Language idea: 0ⁿ1ⁿ

Input: 0011

1. Mark the leftmost unmarked 0 as X.

2. Move right to the leftmost unmarked 1 and mark it Y.

3. Return left to find the next unmarked 0.

4. Repeat until no unmarked 0 remains.

5. Accept if only X and Y markers remain.

The markers store which symbols have already been paired. The finite state controls the scan direction and detects malformed order, such as a remaining 0 after marked 1s.

delta(q, 0) = (p, X, R)

current ID: u q 0 v

write X over 0

move one square right

enter state p

next ID: u X p v

The machine reads the current tape symbol under the head.

The transition chooses a new state, replacement symbol, and movement direction.

The tape persists, so writing a marker changes future scans.

An instantaneous description records the tape content plus the current state position.

A computation is a sequence of such IDs.
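The marking strategy and the single-step rule can be combined into a small simulator. The transition table below is one common textbook layout for 0ⁿ1ⁿ, not necessarily the lecture's; the state names q0 to q4 and the extra rule that accepts the empty word are assumptions.

```python
BLANK = "B"

# (state, read) -> (next state, write, head move); q4 is the accepting state.
DELTA = {
    ("q0", "0"): ("q1", "X", "R"),      # mark the leftmost unmarked 0
    ("q0", "Y"): ("q3", "Y", "R"),      # no 0 left: check the tail
    ("q0", BLANK): ("q4", BLANK, "R"),  # assumption: accept epsilon (n = 0)
    ("q1", "0"): ("q1", "0", "R"),
    ("q1", "Y"): ("q1", "Y", "R"),
    ("q1", "1"): ("q2", "Y", "L"),      # mark the leftmost unmarked 1
    ("q2", "0"): ("q2", "0", "L"),
    ("q2", "Y"): ("q2", "Y", "L"),
    ("q2", "X"): ("q0", "X", "R"),      # back at the marked prefix
    ("q3", "Y"): ("q3", "Y", "R"),
    ("q3", BLANK): ("q4", BLANK, "R"),  # only markers remain
}


def run(word, max_steps=10_000):
    """Run the machine on word; a missing transition means reject."""
    tape = dict(enumerate(word))
    state, head = "q0", 0
    for _ in range(max_steps):
        if state == "q4":
            return True
        move = DELTA.get((state, tape.get(head, BLANK)))
        if move is None:
            return False
        state, write, direction = move
        tape[head] = write
        head += 1 if direction == "R" else -1
    raise RuntimeError("step limit reached")


print(run("0011"), run("001"))
```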

Hierarchy Checklist

- Regular languages are handled by finite automata and regular grammars.

- Context-free languages are handled by CFGs and nondeterministic PDAs.

- Context-sensitive languages use non-contracting grammars and bounded tape intuition.

- Recursive languages are decided by TMs that halt on every input.

- Recursively enumerable languages are recognized by TMs that may loop on nonmembers.

B belongs to the tape alphabet, not the input alphabet in the usual setup.

In the TM strategy for 0ⁿ1ⁿ, what are X and Y used for?

Decidability and Exam Synthesis

The final layer connects recognizers, deciders, halting, lambda calculus, P versus NP, and the lecture-derived exam map into one revision structure.

Concept Explanation

A recognizer accepts strings in the language but may loop forever on strings outside it. A decider always halts, accepting members and rejecting nonmembers. Recursive languages are decidable; recursively enumerable languages are recognizable. Every recursive language is RE, but not every RE language is recursive.

The halting problem asks whether there is a general algorithm that decides whether an arbitrary program halts on an arbitrary input. The standard result is negative, so it marks a boundary on what computation can decide.

Worked Exam Map

Earlier course:

alphabet -> DFA/NFA -> regex -> pumping

Middle course:

PDA -> CFG -> DPDA -> ambiguity

Final course:

CSG -> TM -> recognizer/decider -> halting

lambda calculus -> Church-Turing idea

P vs NP -> Partition as an NP example

Transcript-derived Lecture 11 guidance: the revision cues point to synthesis. Know definitions, trace small machines, and explain limits with concise examples rather than long formal proofs. The short Lecture 11 slide source confirms only the exam-guidance/revision session structure.

lambda x. x

identity function

P: efficiently solvable

NP: efficiently verifiable

Partition: split numbers into equal-sum groups?

Halting: no universal decider

lambda x. x returns its input unchanged.

Lambda calculus is a minimal model of function-based computation.

P asks for efficient algorithms that solve problems.

NP asks whether proposed solutions can be checked efficiently.

The halting result shows that some yes/no questions cannot be decided by any general algorithm.
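The solving-versus-checking distinction can be sketched with Partition. In this hedged Python example, `verify_partition` checks a proposed split in one pass, while `brute_force_partition` tries subsets one by one and can take exponential time. Both function names and the sample numbers are assumptions made for illustration.

```python
from itertools import combinations


def verify_partition(numbers, chosen_indices):
    """Check a proposed split: cheap, one pass over the numbers."""
    chosen = set(chosen_indices)
    left = sum(numbers[i] for i in chosen)
    right = sum(n for i, n in enumerate(numbers) if i not in chosen)
    return left == right


def brute_force_partition(numbers):
    """Search for a split by trying every subset: up to 2^n candidates."""
    indices = range(len(numbers))
    for size in range(len(numbers) + 1):
        for subset in combinations(indices, size):
            if verify_partition(numbers, subset):
                return subset
    return None


print(verify_partition([3, 1, 1, 2, 2, 1], [0, 1, 5]))  # checking a certificate
print(brute_force_partition([1, 2, 5]))                  # no equal split exists
```

The verifier is the part that places Partition in NP. The brute-force search says nothing about whether a faster solver exists.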

Revision Drill

- Define Sigma*, language, DFA, NFA, regex, PDA, CFG, DPDA, TM.

- For each model, name the extra memory it has: none, stack, or tape.

- Practice one trace each: DFA/NFA, PDA stack, CFG derivation, TM tape.

- Explain recognizer versus decider without using circular wording.

- Use Partition to remember the P versus NP distinction: solving versus checking.

Past Exam Practice

What is the main difference between a recognizer and a decider?

Automata Lab: Trace, Branch, Verify

Use the same client-side solver for DFA, NFA, PDA, and Turing-machine demos plus the reconstructed past-paper diagrams. The runtime traces each input, animates the current configuration, and reports what was verified locally in the browser.

Learning Targets

- Trace one input word through deterministic, nondeterministic, stack, and tape-based machines.

- Recreate past-paper diagrams from structured graph and transition definitions.

- See which configuration changes after each transition: state, marker set, stack, tape head, or tape contents.

- Check acceptance conditions without hiding the intermediate configurations.

Artificial Intelligence Methods: Search, Models, and Heuristics

This course is mostly about choosing a model, making a search space, and explaining why a method is sensible when exact search is too expensive.

Answer checklist

problem, representation, objective, move, search rule, limitation

When an answer starts sounding general, turn it into these six concrete choices. The method becomes much easier to mark because the object, score, move rule, and limit are all visible.

Learning Targets

- Separate a general problem from a concrete instance.

- Explain when exact methods, heuristics, local search, and stochastic methods fit.

- Write the six-part search setup examiners keep asking for.

Concept Explanation

A heuristic is not magic. It is a trade: give up a guarantee, gain a route through a huge search space. That trade is useful for TSP, bin packing, MAX-SAT, timetabling, and other problems where enumerating every solution is not realistic.

In exam writing, define the representation, the objective function, and the neighbourhood. Without those three, the search method has nowhere concrete to operate.

Worked Example: TSP as a Search Problem

- Representation: a permutation of cities, such as A-B-C-D-A.

- Initial solution: nearest-neighbour tour, random tour, or a given starting route.

- Evaluation: total route distance, usually minimised.

- Neighbour: swap two cities or reverse a segment with a 2-opt move.

- Search: hill climbing, simulated annealing, tabu search, GA, or a hyper-heuristic.

- Caveat: a locally best route may still be far from the global optimum.

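candidate = representation(instance)
best, best_score = candidate, objective(candidate)
while time remains:
    candidate = choose(neighbour(candidate))
    if objective(candidate) is better than best_score:
        best, best_score = candidate, objective(candidate)
return best

Choose a way to write down a solution.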

Score it with a rule linked to the problem goal.

Move to nearby candidates and keep the best answer seen.

State what the method cannot guarantee.
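The loop above can be sketched in Python for a tiny TSP instance. The city coordinates, the swap neighbourhood, the step count, and the rule of accepting equal-or-better neighbours are all assumptions chosen to keep the example short; they are not the lecture's settings.

```python
import math
import random

# Hypothetical instance: four cities on the corners of a 4 x 3 rectangle.
CITIES = {"A": (0, 0), "B": (0, 3), "C": (4, 3), "D": (4, 0)}


def tour_length(tour):
    """Objective: total distance of the closed route, to be minimised."""
    return sum(math.dist(CITIES[a], CITIES[b])
               for a, b in zip(tour, tour[1:] + tour[:1]))


def swap_neighbour(tour, rng):
    """Neighbour move: swap the cities at two random positions."""
    i, j = rng.sample(range(len(tour)), 2)
    new = tour[:]
    new[i], new[j] = new[j], new[i]
    return new


def local_search(start, steps=200, seed=0):
    rng = random.Random(seed)
    current = best = start
    for _ in range(steps):
        candidate = swap_neighbour(current, rng)
        if tour_length(candidate) <= tour_length(current):
            current = candidate          # move to an equal or better neighbour
        if tour_length(current) < tour_length(best):
            best = current               # remember the best route seen
    return best


start = ["A", "C", "B", "D"]             # a deliberately poor crossing route
best = local_search(start)
print(tour_length(start), tour_length(best))
```

On an instance this small the search reaches the perimeter route, but nothing in the method guarantees that for larger instances.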

What makes a solution a local optimum?

Local Search and Hill Climbing

Local search is simple enough to trace by hand, which is why it is exam-friendly. The marks usually sit in the setup and in the move-by-move explanation.

Six components

problem, representation, initialisation, evaluation, neighbourhood, search strategy

Use this list before tracing any local search question.

Learning Targets

- Trace first-improvement and best-improvement hill climbing.

- Explain plateaus, ridges, and local optima without overclaiming.

- Instantiate the component checklist for MAX-SAT, TSP, bin packing, or knapsack.

Concept Explanation

Hill climbing keeps a current solution and moves to a better neighbour. Best improvement checks the neighbourhood and takes the best improving move. First improvement takes the first improving move it finds. Both can stop at a local optimum even when a better solution exists elsewhere.

For MAX-SAT, a common representation is a bit string assigning truth values. A neighbour flips one bit. Some descriptions maximise satisfied clauses; the lecture traces often minimise unsatisfied clauses. Say which convention you are using, then show the assignment, the changed bit, and the new score.

One bit flip changes the unsatisfied count

The yellow move flips the fourth bit from 1 to 0. It makes the answer better here: 101000 has zero unsatisfied clauses.

101100 flip bit 4: 1 -> 0 gives 101000

Component Checklist Examples

- MAX-SAT: representation is a bit string; evaluation is satisfied or unsatisfied clauses; neighbourhood is usually one-bit flip.

- TSP: representation is a city permutation; evaluation is tour length; neighbourhood might swap two cities or use 2-opt.

- Bin packing: representation assigns items to bins; evaluation can count bins or unused capacity; a move may shift an item to another bin.

- Knapsack: representation is a binary include/exclude string; evaluation is value with a capacity constraint; a move flips one item's inclusion.

- Exam habit: write representation, initial solution, evaluation, neighbourhood, search rule, and stopping condition before tracing moves.

Worked Trace: One-Bit MAX-SAT Move

- Current assignment: s = 101100; the lecture trace uses f(s) as unsatisfied clauses, so f(s)=1.

- Neighbour 101000 flips one bit and has f=0.

- Neighbours such as 111100, 101110, and 101101 have f=1.

- 001100 is marked tabu in the lecture trace, so Tabu Search would not take it even if it were considered.

- The best non-tabu improving move is 101000 because zero unsatisfied clauses is better than one.

- If no non-tabu neighbour improved the unsatisfied count, a plain improving search would stop or a metaheuristic would use its escape rule.

current = initial_solution()
while improving neighbour exists:
    next = choose_improving_neighbour(current)
    current = next
return current

State the current score before considering moves.

Show how each candidate neighbour is created.

Choose according to first or best improvement.

Name the stopping reason, not just the final answer.
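The loop and the four reminders above can be sketched as a small MAX-SAT hill climber in Python. The clause set below is hypothetical (it is not the lecture's formula), the score counts unsatisfied clauses, and the `best` flag switches between best improvement and first improvement.

```python
# Hypothetical clauses: positive i means x_i, negative i means NOT x_i.
CLAUSES = [(1, 2), (-1, 3), (-2, -3), (1, -3)]


def unsatisfied(bits):
    """Evaluation: number of clauses the assignment fails (minimise)."""
    def literal_true(lit):
        value = bits[abs(lit) - 1] == "1"
        return value if lit > 0 else not value
    return sum(1 for clause in CLAUSES
               if not any(literal_true(lit) for lit in clause))


def flip(bits, i):
    """Neighbour move: flip the bit at index i."""
    return bits[:i] + ("0" if bits[i] == "1" else "1") + bits[i + 1:]


def hill_climb(bits, best=True):
    score = unsatisfied(bits)
    while True:
        improving = []
        for i in range(len(bits)):
            neighbour = flip(bits, i)
            neighbour_score = unsatisfied(neighbour)
            if neighbour_score < score:
                improving.append((neighbour_score, neighbour))
                if not best:
                    break            # first improvement: take it immediately
        if not improving:
            return bits, score       # stopping reason: no improving neighbour
        score, bits = min(improving) # best improvement: lowest score wins


print(hill_climb("011", best=True))
print(hill_climb("011", best=False))
```

Starting from 011, the two strategies follow different paths: best improvement flips the third bit straight away, while first improvement takes the first strictly better neighbour it meets and needs a second move.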

What distinguishes best-improvement hill climbing?

Metaheuristics: ILS, Tabu Search, and Simulated Annealing

Metaheuristics add control logic around local search: memory, randomisation, perturbation, or move acceptance that can step away from a tempting dead end.

SA formula

P(accept worse move) = exp(-Delta / T)

For minimisation, Delta is the increase in cost. Lower temperature makes worse moves less likely.

Learning Targets

- Explain exploration and exploitation using concrete search behaviour.

- Trace simulated annealing acceptance for a worse move.

- Compare ILS, Tabu Search, and SA as escape mechanisms.

- Recognise the named move-acceptance and Tabu Search details that appear in the slides.

Concept Explanation

Iterated local search repeatedly improves a solution, perturbs it, then runs local search again. Tabu Search uses memory so recent moves or attributes are temporarily forbidden. Simulated annealing sometimes accepts worse moves, especially early when the temperature is high.

Do not define a metaheuristic as "a better heuristic." The exam-safe definition is that it is a general strategy for guiding subordinate search procedures across many optimisation problems.

exp(-Delta/T); high temperature allows more exploration, cooling reduces it.

Slide Detail Check: Memory and Acceptance Variants

- Tabu list: stores recent moves or attributes so the search does not immediately undo itself.

- Forbidding strategy: decides what enters the tabu list; freeing strategy decides when a tabu item leaves it.

- Aspiration: can allow a tabu move if it is good enough, for example if it beats the best solution found so far.

- Intensification searches more around promising regions; diversification pushes the search into less-used regions.

- Other move acceptance rules: threshold accepting, Great Deluge, late acceptance, and record-to-record travel all relax strict improving-only search in different ways.

- SA tuning: initial temperature, cooling schedule, stopping rule, and reheating affect how long the search explores before becoming stricter.

Temperature changes how brave the search is

The same worse move is easier to accept when temperature is high. As cooling continues, the acceptance gate narrows.

exp(-5 / 10) ~= 0.607 accepts more often than exp(-5 / 2) ~= 0.082.

Worked Trace: SA Accept or Reject

- Minimisation: the current solution is x=5, so f(5)=(5-3)^2=4.

- Candidate solution is x=6, so f(6)=(6-3)^2=9.

- Delta = 9 - 4 = 5, so the candidate is worse.

- At T = 10, P = exp(-5/10) = exp(-0.5) ~= 0.607.

- If the random draw is 0.3, accept the worse move; if the draw is 0.9, reject it.

if candidate is better:
    accept
else if random() < exp(-Delta / T):
    accept worse move
else:
    reject

Better moves are easy: accept them.

Worse moves require the probability calculation.

Compare the probability with the random draw.

Cooling makes this forgiveness fade over time.
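The acceptance rule can be checked numerically with a short Python sketch. The function names are illustrative, and the optional `draw` argument is an assumption added so the worked trace can be replayed with a fixed random number.

```python
import math
import random


def acceptance_probability(delta, temperature):
    """Probability of accepting a move whose cost rises by delta (minimisation)."""
    if delta <= 0:
        return 1.0                       # better or equal moves are accepted
    return math.exp(-delta / temperature)


def accept(delta, temperature, draw=None):
    """Compare the probability with a random draw in [0, 1)."""
    if draw is None:
        draw = random.random()
    return draw < acceptance_probability(delta, temperature)


delta = (6 - 3) ** 2 - (5 - 3) ** 2      # f(6) - f(5) = 9 - 4 = 5
print(round(acceptance_probability(delta, 10), 3))
print(round(acceptance_probability(delta, 2), 3))
print(accept(delta, 10, draw=0.3), accept(delta, 10, draw=0.9))
```

The same Delta gives a much smaller probability at T = 2 than at T = 10, which is the cooling effect described above.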

Which method is most directly linked to a list of forbidden recent moves?

Evolutionary and Genetic Algorithms

Genetic algorithms are exam-friendly because the loop is stable: initialise a population, score it, select parents, vary offspring, and replace individuals.

Permutation warning

For TSP, ordinary binary-style crossover can duplicate a city or drop one. Use an order-aware operator such as OX when the route is a permutation.
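A short Python sketch shows the problem and one fix. `one_point` is ordinary cut-and-join crossover; `order_crossover` is a simplified OX variant that copies a segment from the first parent and fills the remaining positions left to right in the second parent's order (textbook OX often starts filling after the segment instead). The parent routes and cut points are made up for illustration.

```python
def one_point(parent1, parent2, cut):
    """Binary-style crossover: can repeat or lose cities in a permutation."""
    return parent1[:cut] + parent2[cut:]


def order_crossover(parent1, parent2, start, end):
    """Simplified OX: keep parent1[start:end], fill the rest in parent2 order."""
    child = [None] * len(parent1)
    child[start:end] = parent1[start:end]
    kept = set(parent1[start:end])
    fill = (city for city in parent2 if city not in kept)
    return [city if city is not None else next(fill) for city in child]


p1 = list("ABCDE")
p2 = list("CEBAD")
print(one_point(p1, p2, 2))           # may contain duplicates
print(order_crossover(p1, p2, 1, 3))  # always a valid permutation
```

The design point is that the operator must preserve the representation: every city appears exactly once in the OX child, which one-point crossover cannot promise.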

Learning Targets

- Write genetic algorithm pseudocode with the right operators.

- Compute roulette-wheel selection probabilities from fitness values.

- Explain why representation changes the crossover and mutation operators.

- Place memetic algorithms, evolution strategies, and genetic programming in the evolutionary-method family.

Concept Explanation

A population holds many candidate solutions. Selection biases reproduction toward fitter individuals. Crossover recombines parents. Mutation adds small random variation. Replacement decides who survives into the next generation.

A memetic algorithm adds local improvement to individuals, usually after crossover or mutation. In exam terms: GA gives broad exploration; local search sharpens candidates.

GA Pseudocode Must Name the Operators

- Initialise a population using the representation for the problem.

- Evaluate each individual with the fitness function.

- Select parents, for example by roulette wheel, tournament, or rank-based selection.

- Apply crossover and mutation that preserve the representation, such as bit-flip mutation for binary strings or order-aware crossover for TSP routes.

- Choose replacement and stopping rules, such as generation limit, time limit, or no improvement.

Crossover is controlled mixing, not random copying

The cut point decides which parent contributes each segment. Mutation then changes a small part so the population does not collapse too quickly.

Worked Example: Roulette Selection

- Fitness values are A=10, B=30, C=60. Total fitness is 100.

- Selection probabilities are A=0.10, B=0.30, C=0.60.

- A random number 0.25 selects B because it lies after A's 0.10 slice and within B's cumulative 0.40 boundary.

- This does not guarantee C is always chosen; it only makes C more likely.
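The cumulative-slice reasoning in this example can be sketched in Python. The function name and the optional `draw` argument are assumptions added so a fixed random number can be replayed.

```python
import random


def roulette_select(fitness, draw=None):
    """Pick a name with probability proportional to its fitness."""
    total = sum(fitness.values())
    if draw is None:
        draw = random.random()
    threshold = draw * total          # position of the pointer on the wheel
    cumulative = 0
    for name, value in fitness.items():
        cumulative += value           # right edge of this individual's slice
        if threshold < cumulative:
            return name
    return name                       # fallback for rounding when draw is near 1


fitness = {"A": 10, "B": 30, "C": 60}
print(roulette_select(fitness, draw=0.25))
```

With a draw of 0.25 the pointer sits at 25, past A's edge at 10 and before B's edge at 40, so B is selected.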

Slide Detail Check: Evolutionary Variants

- Evolution strategies are evolutionary methods that often emphasise mutation and strategy parameters as part of search control.

- Memetic algorithms combine population search with local search; the local search improves individuals before they compete or survive.

- Multimeme memetic algorithms allow different local-improvement methods, or memes, to be selected or adapted during the run.

- Genetic programming evolves program-like structures such as expressions, rules, or decision trees; in this course it also connects to generation hyper-heuristics.

- Replacement policy matters: generational replacement swaps most of the population, while steady-state replacement changes only part of it.

- Selection pressure changes with roulette-wheel, tournament, rank, truncation, or Boltzmann selection; too much pressure can cause premature convergence.

population = initialise()
evaluate(population)
while not stopping_condition:
    parents = select(population)
    offspring = crossover_and_mutate(parents)
    evaluate(offspring)
    population = replace(population, offspring)

Name the representation before naming the operator.

Selection uses fitness; crossover and mutation create variation.

Replacement controls convergence and diversity.

Elitism keeps the best candidates from being lost.

Why use order crossover for TSP routes?

Hyper-Heuristics and Timetabling Applications

A hyper-heuristic searches over heuristics rather than directly searching only over solutions. That distinction is small in wording but big in marks.

Timetabling model

exam = vertex, conflict = edge, timeslot = colour

A valid timetable gives adjacent vertices different colours.

Learning Targets

- Distinguish metaheuristics from hyper-heuristics.

- Classify selection vs generation and online vs offline learning.

- Model exam timetabling as graph colouring.

- Recognise HyFlex, LD/SD graph-colouring choices, and application details from the hyper-heuristic slides.

Concept Explanation

A selection hyper-heuristic chooses among existing low-level heuristics. A generation hyper-heuristic creates new heuristics. A no-learning system follows a fixed rule, an online system learns while solving, and an offline system learns before being used.

For timetabling, graph colouring gives the cleanest exam explanation. Vertices are exams. Edges mean two exams clash because at least one student takes both. Colours are timeslots.

Timetabling as graph colouring

Conflicting exams are connected by edges. Slot colours are shown in the legend; exams may share a colour only when no edge connects them.

Worked Example: Exam Timetabling

- Make one vertex for each exam: AI, Databases, Networks, Maths.

- Add an edge between two exams if a student is enrolled in both.

- Assign colours as timeslots so connected exams never share a colour.

- A low-level heuristic might choose the next vertex by largest degree or saturation degree.

- A selection hyper-heuristic decides which low-level heuristic to use next.
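The modelling steps above can be sketched in Python with a largest-degree greedy colouring. The exam names come from the example; the conflict pairs are hypothetical, and ties in degree are broken alphabetically as an arbitrary choice.

```python
EXAMS = ["AI", "Databases", "Networks", "Maths"]

# Hypothetical clashes: each pair shares at least one student.
CONFLICTS = [("AI", "Databases"), ("AI", "Networks"),
             ("Databases", "Networks"), ("Networks", "Maths")]


def largest_degree_colouring(exams, conflicts):
    """Colour vertices in order of decreasing degree; colours are timeslots."""
    neighbours = {exam: set() for exam in exams}
    for a, b in conflicts:
        neighbours[a].add(b)
        neighbours[b].add(a)
    order = sorted(exams, key=lambda e: (-len(neighbours[e]), e))
    slot = {}
    for exam in order:
        used = {slot[n] for n in neighbours[exam] if n in slot}
        # Lowest slot not already used by a clashing exam.
        slot[exam] = next(s for s in range(len(exams)) if s not in used)
    return slot


print(largest_degree_colouring(EXAMS, CONFLICTS))
```

Greedy colouring always returns a valid timetable, but it does not in general guarantee the minimum number of timeslots.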

Slide Detail Check: Hyper-Heuristic Variants

- No-learning hyper-heuristics use fixed choice rules; learning hyper-heuristics adapt their heuristic choices from feedback.

- Random permutation applies low-level heuristics in a shuffled order; random permutation gradient keeps using an improving heuristic before moving on.

- Gradient selection rewards heuristics that improve the solution and can reduce use of unhelpful ones.

- HyFlex separates a general high-level search method from domain-specific low-level heuristics, which is why it supports cross-domain comparison.

- LD means largest degree and SD means saturation degree in graph-colouring style timetabling.

- Chromatic number is the minimum number of colours needed; k-colouring asks whether the graph can be coloured with k colours.

- Policy matrices, tensor selection, and apprenticeship learning are application-level ways to learn or organise heuristic choices rather than hand-picking every move.

- Bin-packing applications distinguish online bin packing from offline bin packing and use heuristics such as Next-Fit, First-Fit, Best-Fit, and Best-Fit Decreasing.

while problem is unfinished:
    h = choose_low_level_heuristic(state)
    state, result = apply(h, state)
    update_feedback(h, result)

The high-level method does not directly pick a solution move.

It picks which heuristic gets to act.

Feedback can reward or penalise that choice.

The goal is reusable control across related problem instances.
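A toy Python sketch of this control loop follows. Everything in it is an assumption made for illustration: the problem (move an integer towards a target), the three low-level heuristics, the +1/-1 scoring, and the rule of keeping only improving moves.

```python
def run_hyper_heuristic(start, target, steps=30):
    # Low-level heuristics: each one proposes a new state.
    heuristics = {
        "plus_ten": lambda x: x + 10,
        "plus_one": lambda x: x + 1,
        "minus_three": lambda x: x - 3,
    }
    scores = {name: 0 for name in heuristics}   # feedback memory

    def cost(x):
        return abs(x - target)

    state = start
    for _ in range(steps):
        # High level: pick the heuristic with the best score so far.
        name = max(scores, key=scores.get)
        candidate = heuristics[name](state)
        if cost(candidate) < cost(state):
            state = candidate
            scores[name] += 1                   # reward a helpful heuristic
        else:
            scores[name] -= 1                   # penalise an unhelpful one
    return state, scores


print(run_hyper_heuristic(0, 23))
```

The high-level loop never edits the state itself; it only decides which heuristic acts next and shifts away from `plus_ten` once that heuristic stops helping.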

What does a selection hyper-heuristic select?

Knowledge Representation, Reasoning, LLMs, and Chain of Thought

The exam can mix classic KR&R with newer LLM and chain-of-thought questions. Keep the answer grounded: define the representation, explain the reasoning process, then state the limitation.

Exam Caveat

LLMs and chain-of-thought show up in the newer papers, but the slides give search and optimisation much more room. Keep these answers compact: what it is, one mechanism, and one limitation.

Learning Targets

- Compare declarative, procedural, semantic, and heuristic knowledge.

- Explain syntax vs semantics in logic-based representation.

- Write concise answers on CBR, LLMs, and chain-of-thought prompting.

- Recognise production rules, frames, conceptual graphs, DSS, learning agents, and decision-tree exam prompts.

Concept Explanation

Symbolic AI uses explicit symbols and rules, such as logic, frames, production rules, and case libraries. Non-symbolic AI uses distributed numeric representations, such as neural networks. A good answer says what is represented and how inference or retrieval works.

For LLMs, mention transformer-style sequence modelling and self-attention at a high level. For chain-of-thought, say it encourages intermediate reasoning steps, but can still produce wrong or unfaithful explanations.

Two ways to represent knowledge

Symbolic systems name the relation directly. LLM-style representations link tokens with learned attention weights, which is useful but not the same as an explicit logic fact.

explicit fact: Has(Rose, Thorn)

Chain-of-thought diagram labels: state givens, step 2, apply rule, answer.

Chain-of-thought prompting asks for intermediate steps before the final answer; those steps can still be wrong.

Worked Example: CBR Four Rs

- Retrieve a similar past case.

- Reuse the old solution or adapt it.

- Revise the proposed answer after testing or feedback.

- Retain the new solved case for future use.

Slide and Exam Detail Check: KR, Learning, and DSS

- Production rules use IF-THEN rules with a match-resolve-act cycle: match rules to working memory, resolve conflicts, then fire a rule.

- Semantic networks and conceptual graphs represent knowledge as nodes and labelled relations; frames group slots, fillers, defaults, and inheritance.

- First-order logic and Prolog-style rules are symbolic representations: syntax controls legal form, semantics controls truth or meaning.

- Decision support systems combine data, models, and user judgement to support decisions rather than automatically guarantee the best decision.

- Learning agents are older-paper material: performance element acts, learning element improves it, critic gives feedback, and problem generator suggests exploratory actions.

- PEAS describes an agent task environment: performance measure, environment, actuators, and sensors.

- Decision trees split examples by attributes; information gain chooses a split by reducing class uncertainty.
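The information-gain idea in the last bullet can be sketched in Python using the standard entropy-based definition. The tiny data set and the function names are made up for illustration.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Class uncertainty in bits."""
    total = len(labels)
    return -sum((count / total) * log2(count / total)
                for count in Counter(labels).values())


def information_gain(values, labels):
    """Entropy before the split minus the weighted entropy after it."""
    total = len(labels)
    remainder = 0.0
    for value in set(values):
        subset = [label for v, label in zip(values, labels) if v == value]
        remainder += len(subset) / total * entropy(subset)
    return entropy(labels) - remainder


labels = ["yes", "yes", "no", "no"]
print(information_gain(["a", "a", "b", "b"], labels))  # separates the classes
print(information_gain(["a", "b", "a", "b"], labels))  # tells us nothing
```

A decision-tree learner would split on the first attribute here, because it removes all uncertainty about the class while the second removes none.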

Every rose has a thorn:

forall x, Rose(x) -> exists y, Thorn(y) and Has(x,y)

syntax = allowed formula shape

semantics = truth conditions

The universal quantifier handles "every".

The existential quantifier handles "has a".

Syntax checks whether the formula is well formed.

Semantics says when the formula is true in a model.
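The semantic side can be sketched in Python by evaluating the formula in a small finite model. The domain elements and the function name are hypothetical; `rose`, `thorn`, and `has` play the roles of the predicates Rose, Thorn, and Has.

```python
def every_rose_has_a_thorn(domain, rose, thorn, has):
    """Evaluate: forall x, Rose(x) -> exists y, Thorn(y) and Has(x, y)."""
    return all(
        (x not in rose) or any(y in thorn and (x, y) in has for y in domain)
        for x in domain
    )


domain = {"r1", "r2", "t1"}
rose = {"r1", "r2"}
thorn = {"t1"}

# r2 has no thorn in this model, so the formula is false here.
print(every_rose_has_a_thorn(domain, rose, thorn, {("r1", "t1")}))
# Adding Has(r2, t1) makes it true.
print(every_rose_has_a_thorn(domain, rose, thorn, {("r1", "t1"), ("r2", "t1")}))
```

The formula text is the same in both calls; only the model changes. That is the syntax versus semantics split: well-formedness is fixed, truth depends on the interpretation.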

In logic representation, what is the difference between syntax and semantics?

Modelling and Simulation

Simulation questions reward careful classification and clean model components. The safest answers say what the model is for, what it includes, and what assumptions it makes.

Conceptual model checklist

objectives, inputs, outputs, content, assumptions, simplifications

Then check validity, credibility, utility, and feasibility.

Learning Targets

- List conceptual-model components and requirements.

- Classify simulations as static/dynamic, discrete/continuous, deterministic/stochastic.

- Sketch stock-flow logic without confusing stocks and rates.

- Explain how simplification choices such as aggregation, exclusion, and random variables affect a conceptual model.

Concept Explanation

A conceptual model is the bridge between the real situation and the implemented simulation. It says what is inside the boundary, what is ignored, and which assumptions are acceptable for the decision being supported.

Discrete-event simulation changes state at event times. System dynamics uses stocks, flows, and feedback loops. Agent-based modelling simulates individual agents and their interactions.

Stock grows when inflow beats outflow

The box is the accumulated queue. Here inflow is 8 jobs per day and outflow is 5 jobs per day, so the stock rises by 3 jobs per day.

Worked Example: Stock and Flow

- Stock: number of students currently waiting for feedback.

- Inflow: new submissions arriving per day, for example 8 per day.

- Outflow: marked submissions completed per day, for example 5 per day.

- Net change is 8 - 5 = +3 per day, so the stock grows.

- The model may simplify marker availability and student behaviour; say so explicitly.
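The stock-and-flow arithmetic can be stepped day by day in a short Python sketch. It assumes constant rates, a one-day time step, and a stock that cannot go below zero; the function name is illustrative.

```python
def simulate_stock(initial, inflow, outflow, days):
    """Return the stock level at the start and after each day."""
    stock = initial
    history = [stock]
    for _ in range(days):
        # Stock accumulates the net flow; rates are per day.
        stock = max(0, stock + inflow - outflow)
        history.append(stock)
    return history


print(simulate_stock(initial=0, inflow=8, outflow=5, days=4))
```

The list holds stock levels, not rates. Keeping that distinction visible is the main point of a stock-flow sketch.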

Slide Detail Check: Building a Simpler Model

- 80/20 rule: keep the small set of model features that explain most of the behaviour needed for the decision.

- Aggregation: combine similar entities or states when individual detail is not needed.

- Exclusion: leave out detail that does not affect the decision question enough to justify the extra complexity.

- Random variables: represent uncertain inputs such as arrivals, service times, or demand.

- Rule reduction: simplify detailed operating rules into a smaller rule set that still matches the model purpose.

- Split models: separate a large model into connected submodels when one giant model would be too hard to build or validate.

- M/M/1 queue: a one-server queue model with random arrivals and service is a classic example for connecting queues, events, and waiting-time outputs.

- Traffic simulation: examples such as VISSIM show why simulation is useful when real-world experiments would be expensive, disruptive, or unsafe.

queue model:
    dynamic because state changes over time
    discrete because arrivals/services are events
    stochastic if arrival or service times are random

Dynamic means time matters.

Discrete means state changes at separate events or steps.

Stochastic means randomness affects outcomes.

Tie each label to a feature of the model.

In a system dynamics diagram, what is a stock?

AIM Exam Practice

Use this as the final pass: definitions, traces, calculations, and the exam caveats worth remembering.

Main Revision List

Start with local search setup, SA acceptance, GA operators, hyper-heuristic classification, KR&R definitions, conceptual modelling, simulation classification, and short LLM/CoT answers.

Learning Targets

- Turn a vague AIM question into a structured answer quickly.

- Spot the marking words: define, compare, trace, compute, classify, discuss.

- Use exam caveats without turning them into waffle.

Exam-Focus Map

Trace: hill climbing, SA, GA selection, stack/stock-flow style model logic. Compare: exact vs heuristic, local search vs metaheuristic, metaheuristic vs hyper-heuristic, symbolic vs non-symbolic. Define: neighbourhood, objective, fitness, syntax, semantics, validity, stochastic.

Learning agents and decision trees show up in older papers. Know the definitions, then put most of your time into the topics that repeat more often: search, GA, hyper-heuristics, KR&R, LLM/CoT, and simulation.

Answer Templates

- Search setup: representation, initial solution, evaluation, neighbourhood, acceptance rule, stopping condition.

- Compare methods: state the shared goal, give the mechanism difference, then one strength and one limitation.

- Calculation: write the formula, substitute values, compare with the threshold or random draw, conclude.

- Simulation: purpose, boundary, assumptions, classification, validation concern.

Define LLM as sequence model trained on large text corpora.

Mention transformer/self-attention at a high level.

Explain prediction of next tokens and emergent task behaviour.

Add limitation: hallucination, bias, weak grounding, or opaque reasoning.

It defines the method before discussing behaviour.

It uses one technical mechanism without drowning in detail.

It gives the examiner a limitation mark.

It does not claim more detail than the notes give.

Older-Paper Topics Still Need a Definition

- Learning agent: performance element chooses actions, learning element improves behaviour, critic evaluates performance, and problem generator encourages useful exploration.

- PEAS: performance measure, environment, actuators, and sensors. Use it to specify the task environment before describing the agent.

- Decision tree: a tree classifier that tests attributes at internal nodes and predicts a class at leaves.

- Information gain: the split score based on how much an attribute reduces uncertainty about the class labels.

- Exam caveat: older papers use these topics more directly; the newer pattern leans toward search, GA, hyper-heuristics, KR&R, LLM/CoT, and simulation.

What is the strongest first move in a compare question?

Exam Practice Crossword

Final-pass terms for answer planning.